How To Become A Data Scientist - A Complete Guide on Data Science Roadmap For 2023

What Is a Data Scientist?

A data scientist is a professional who draws insights from data and solves complex problems by combining statistical analysis, programming, and machine learning techniques.

What Does a Data Scientist Do?

A data scientist collects, cleans, and analyzes large and complex data sets using statistical methods and machine learning algorithms to gain insights and make data-driven decisions.

They work with various types of data, such as structured, unstructured, and semi-structured, from multiple sources such as sensors, social media, and enterprise systems.

Data scientists develop predictive models, create visualizations, and communicate their findings to stakeholders to help them make informed decisions.

They use programming languages such as Python, R, and SQL to perform data analysis and build predictive models that can identify patterns and trends in the data.

In addition to analysis and modeling, data scientists may design and implement experiments to test hypotheses and improve data collection processes.

Overall, a data scientist's role is to extract actionable insights from data to help organizations solve complex problems and make data-driven decisions.

Popular Roles Within Data Science

- Data Scientist

- Data Engineer

- Data Analyst

- Machine Learning Engineer

- Database Administrator

- Data Architect

- Business Analyst

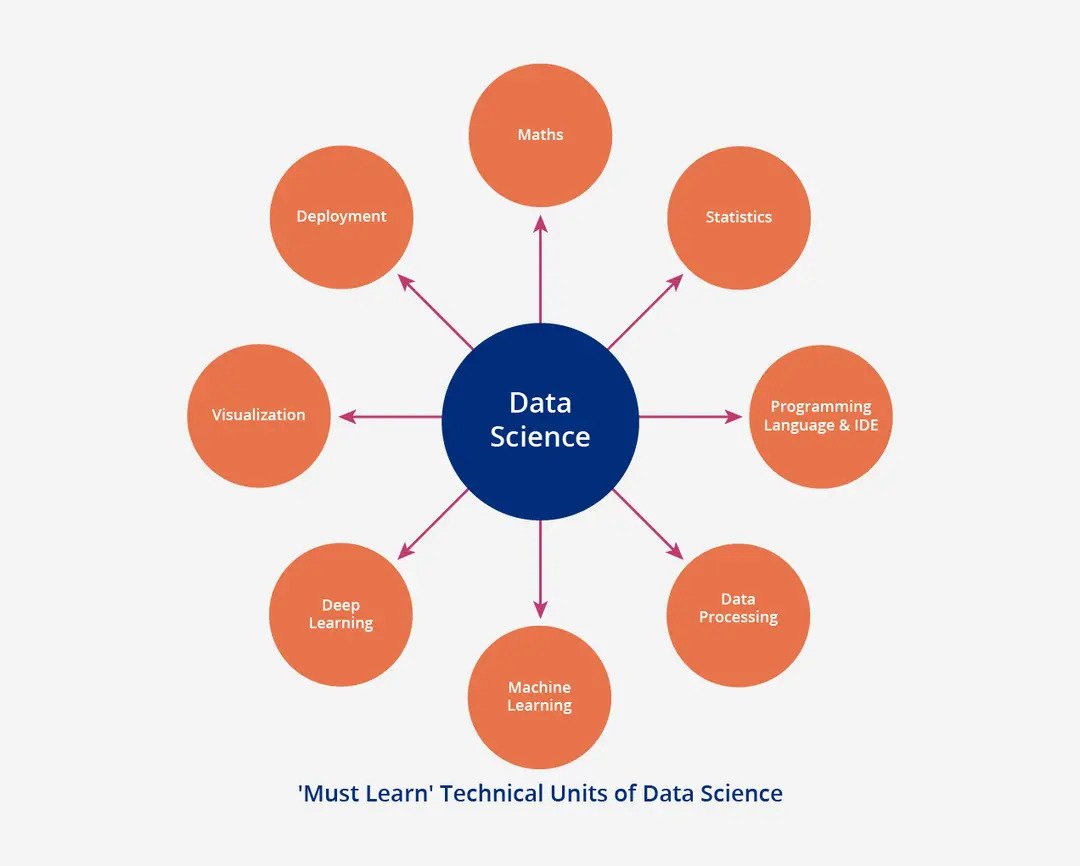

Master Data Skills

Applied Statistics and Mathematics

Mathematics

- Linear Algebra

- Probability

- Calculus

Statistics

- Probability theory

- Descriptive Statistics

- Inferential Statistics
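
To make descriptive statistics concrete, here is a minimal sketch using Python's built-in `statistics` module. The `orders` list is made-up sample data, used only for illustration.

```python
import statistics

# Hypothetical sample: daily order counts for one week.
orders = [12, 15, 11, 15, 20, 18, 14]

mean = statistics.mean(orders)      # average value
median = statistics.median(orders)  # middle value, less sensitive to outliers
mode = statistics.mode(orders)      # most frequent value
stdev = statistics.stdev(orders)    # sample standard deviation (divides by n - 1)

print(f"mean={mean:.2f} median={median} mode={mode} stdev={stdev:.2f}")
```

Descriptive statistics like these summarize the data you have. Inferential statistics goes one step further and uses a sample to draw conclusions about a larger population.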

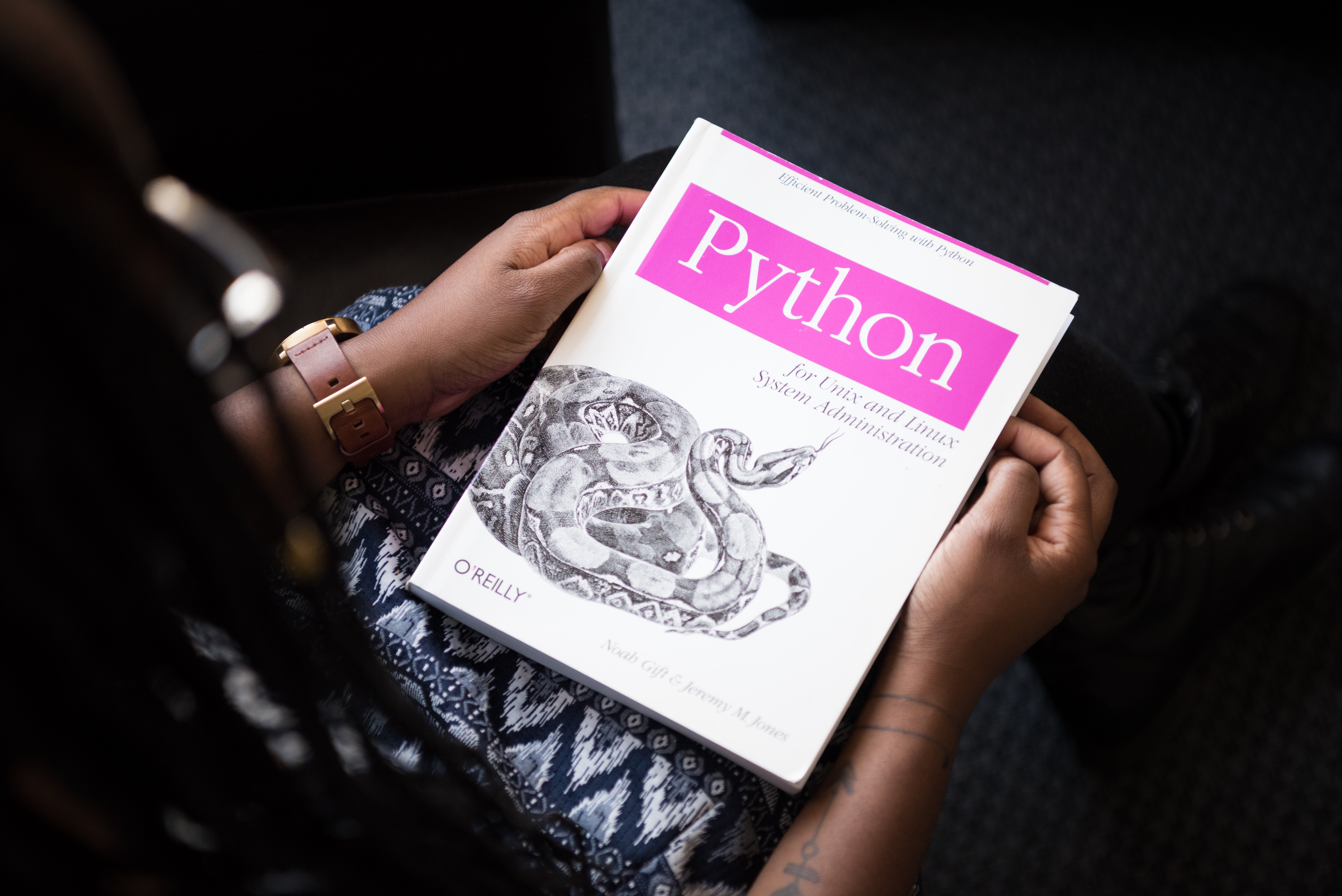

Learn Python

- Data Structures (various data types, lists, tuples, dictionaries, arrays, sets, matrices, vectors, etc.)

- Defining and writing user-defined functions

- Different kinds of loops and conditional statements such as if, else, etc.

- Searching and Sorting algorithms

- Basic programming skills (fundamentals such as variables, data types, control structures, functions, and classes)
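
The topics above fit together in even a small script. The sketch below is one possible illustration, not a prescribed exercise: the `scores` data and the `binary_search` helper are hypothetical names chosen for this example.

```python
def binary_search(sorted_items, target):
    """Return the index of target in sorted_items, or -1 if it is absent."""
    low, high = 0, len(sorted_items) - 1
    while low <= high:                      # loop until the search window is empty
        mid = (low + high) // 2
        if sorted_items[mid] == target:     # conditional statements
            return mid
        elif sorted_items[mid] < target:
            low = mid + 1                   # target can only be in the right half
        else:
            high = mid - 1                  # target can only be in the left half
    return -1


# A dictionary mapping (made-up) student names to exam scores.
scores = {"ana": 82, "ben": 67, "cy": 91}

# A loop with a condition, written as a list comprehension.
passed = [name for name, score in scores.items() if score >= 70]

# Sorting, then searching the sorted list.
ranked = sorted(scores.values())
position = binary_search(ranked, 82)

print(passed, ranked, position)
```

Binary search only works on sorted data, which is why the scores are sorted first.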

Get Familiar with Python Libraries

- NumPy helps you perform fast numerical operations on arrays of data. Most algorithms expect numeric input, so data that is not already numeric is usually encoded as numbers and stored in NumPy arrays.

- Pandas is an open-source data analysis and manipulation tool. With pandas, you work with DataFrames, which are tables of rows and labeled columns, similar to a spreadsheet in Excel.

- Matplotlib lets you draw graphs and charts of your findings. Results in tabular form can be hard to interpret, so plotting them often makes patterns easier to see.

- Scikit-Learn is one of the most popular Machine Learning Libraries in Python. Scikit-Learn has various machine learning algorithms and modules for pre-processing, cross-validation, etc.

- Seaborn is a Python data visualization library based on Matplotlib. It provides a high-level interface for creating informative and attractive statistical graphics.
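
As a quick taste of the first two libraries, here is a minimal sketch that assumes NumPy and pandas are installed. The heights and sales figures are invented for illustration.

```python
import numpy as np
import pandas as pd

# NumPy: element-wise arithmetic on a whole array, with no explicit loop.
heights_cm = np.array([160.0, 172.5, 181.0, 168.5])
heights_m = heights_cm / 100
mean_height = heights_m.mean()

# pandas: a DataFrame is a table with labeled columns.
df = pd.DataFrame({
    "city": ["Pune", "Delhi", "Pune", "Delhi"],
    "sales": [120, 90, 150, 110],
})

# Group the rows by city and add up the sales in each group.
sales_by_city = df.groupby("city")["sales"].sum()

print(mean_height)
print(sales_by_city)
```

From here, a DataFrame like `df` can be passed to Matplotlib or Seaborn for plotting, or to Scikit-Learn for modeling.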

Machine Learning

- Supervised Learning

- Unsupervised Learning

- Reinforcement Learning

- Deep Learning

- Model Evaluation and Selection

SQL

- Basics of relational databases

- Basic Queries: SELECT, WHERE, LIKE, DISTINCT, BETWEEN, GROUP BY

- Advanced Queries: CTE, Subqueries, Window Functions

- Joins: Left, Right, Inner, Full

- Stored procedures and functions
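
You can practice several of these SQL topics without installing a database server, because Python ships with the `sqlite3` module. The sketch below uses an in-memory SQLite database. The `customers` and `orders` tables and their rows are hypothetical, and SQLite is just one convenient choice (note that it does not support stored procedures).

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # temporary database that lives in RAM
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'Ana'), (2, 'Ben'), (3, 'Cy');
    INSERT INTO orders VALUES (1, 1, 50.0), (2, 1, 70.0), (3, 2, 30.0);
""")

query = """
    WITH totals AS (                 -- CTE: total order amount per customer
        SELECT customer_id, SUM(amount) AS total
        FROM orders
        GROUP BY customer_id
    )
    SELECT c.name, COALESCE(t.total, 0) AS total
    FROM customers AS c
    LEFT JOIN totals AS t            -- LEFT JOIN keeps customers with no orders
        ON t.customer_id = c.id
    ORDER BY total DESC, c.name
"""
rows = conn.execute(query).fetchall()
conn.close()

for name, total in rows:
    print(name, total)
```

Swapping `LEFT JOIN` for `INNER JOIN` would drop Cy from the result, since Cy has no matching rows in `orders`.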

Deep Learning

- Neural network architecture: Understanding the different types of neural network architectures (such as convolutional neural networks, recurrent neural networks, and transformer models) and when to use each one is important for building effective deep learning models.

- Optimization techniques: Knowing how to optimize the weights and biases of neural networks to minimize loss and improve accuracy is critical for training deep learning models. Techniques such as stochastic gradient descent, Adam, and Adagrad are commonly used.

- Regularization: Overfitting is a common problem in deep learning, so knowing how to use regularization techniques (such as dropout, L1/L2 regularization, and early stopping) to prevent overfitting is important.

- Data preprocessing: Preprocessing data is a crucial step in building effective deep learning models. Techniques such as normalization, one-hot encoding, and data augmentation can be used to prepare data for deep learning models.

- Transfer learning: Transfer learning involves using pre-trained neural network models and adapting them for a new task. This can be a powerful technique for data scientists who need to build models quickly and with limited data.
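
Several of these ideas can be tried on a toy problem before moving to a deep learning framework. The sketch below is a simplified illustration in plain Python, not a neural network: it fits a straight line with batch gradient descent (a simpler relative of stochastic gradient descent), with an optional L2 penalty on the weight. All function names and data here are assumptions made for the example.

```python
def min_max_normalize(values):
    """Rescale values to the range [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


def one_hot(labels):
    """Encode each label as a 0/1 vector, one position per distinct class."""
    classes = sorted(set(labels))
    return [[1 if label == c else 0 for c in classes] for label in labels]


def fit_line(xs, ys, lr=0.1, epochs=2000, l2=0.0):
    """Fit y = w*x + b by batch gradient descent on mean squared error.

    l2 adds a penalty of l2 * w**2 to the loss (L2 regularization).
    """
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = sum(2 * e * x for e, x in zip(errors, xs)) / n + 2 * l2 * w
        grad_b = sum(2 * e for e in errors) / n
        w -= lr * grad_w   # step against the gradient to reduce the loss
        b -= lr * grad_b
    return w, b


# Preprocessing: scale a raw feature and encode a categorical one.
xs = min_max_normalize([10, 20, 30, 40])
encoded = one_hot(["cat", "dog", "cat"])

# Targets follow y = 6*x + 1 on the normalized inputs.
ys = [1.0, 3.0, 5.0, 7.0]
w, b = fit_line(xs, ys)
w_reg, b_reg = fit_line(xs, ys, l2=0.1)

print(w, b)      # close to 6 and 1
print(w_reg)     # smaller than w, because the penalty shrinks the weight
```

Deep learning frameworks apply the same loop to millions of weights and compute the gradients automatically, but the update rule has the same shape.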